CalibRGBD: Sphere-Based RGB-D Calibration Repository

RFAI - Laboratoire d'Informatique Fondamentale et Appliquée de Tours (EA 6300) - France

Repository description


The Sphere RGB-D Calibration benchmark (version 2019_03) is designed to evaluate sphere-based RGB-D calibration methods on a common dataset. We propose several metrics to evaluate the calibration results, and we provide the results of three algorithms.

Calibration pipeline (diagram): RGB-D image pairs and RGB intrinsic parameters (optional) → RGB-D calibration → output: extrinsic parameters and depth intrinsic parameters (optional).

The following sections describe the capture environment, the dataset architecture and the evaluation metrics. For more details, refer to the dataset paper [1].

To download a dataset, click its name in the table below:

| Dataset | Color Camera (Resolution) | Depth Camera (Resolution) | # RGB-D Pairs | Support | Capture Setup | Known Parameters |
|---|---|---|---|---|---|---|
| Random | Hololens n°1 (1280x720) | Structure Sensor (640x480) | 72 | Single Sphere Support | Stationary | Intrinsic RGB, Intrinsic Depth |
| Equidistant | Hololens n°2 (1280x720) | Structure Sensor (640x480) | 27 | Single Sphere Support | Stationary | Intrinsic RGB, Intrinsic Depth |
| Double | Realsense SR300 (1920x1080) | Realsense SR300 (640x480) | 40 | Double Sphere Support | Hand-held | Intrinsic RGB, Intrinsic Depth, Extrinsic RGB-D |
| Synthetical | 1280x960 | - | 20 | Single Sphere | - | All (Absolute Values) |

The source code to perform an RGB-D calibration with these files is not available.

Capture environment


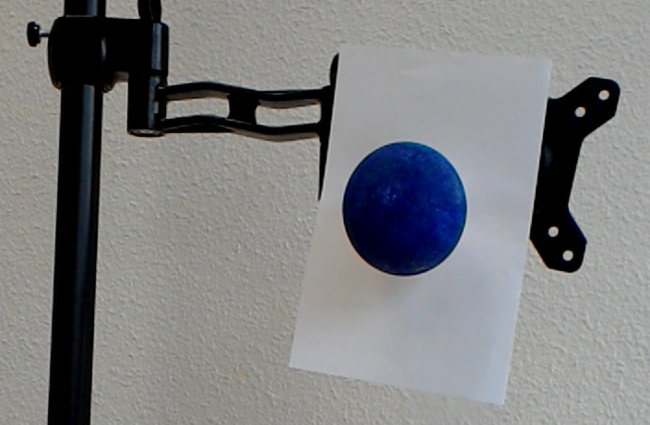

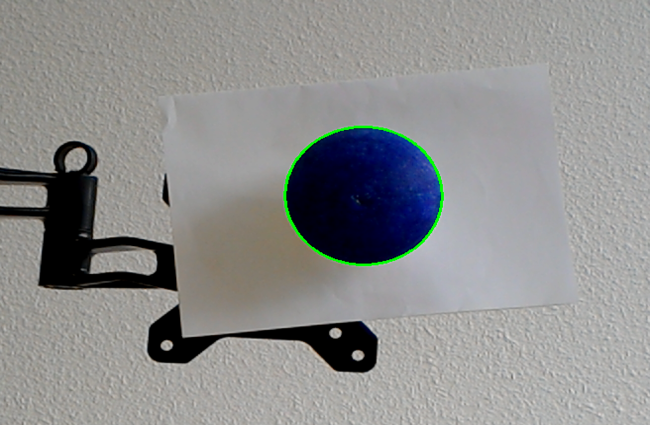

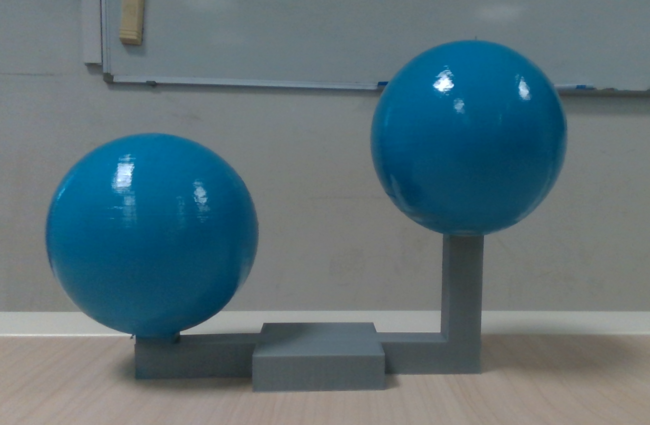

These datasets were captured under the following conditions:

- Fixed sphere

- Colored sphere

- Known sphere radius

- Temporally distinct frames

- The sphere moves through most of the cameras' field of view

- Good lighting conditions

- Manufacturer RGB and depth intrinsic parameters are known

Dataset tree


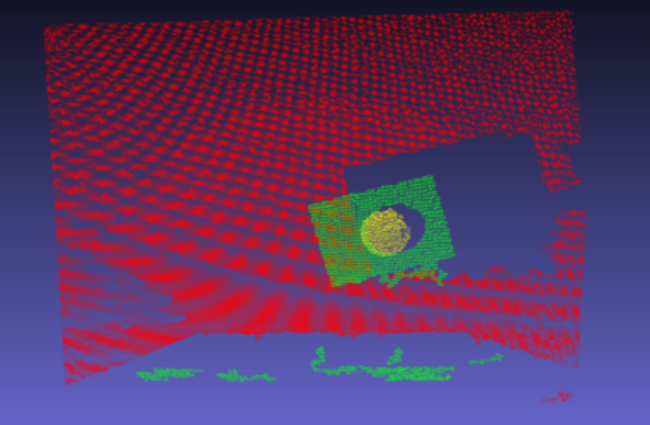

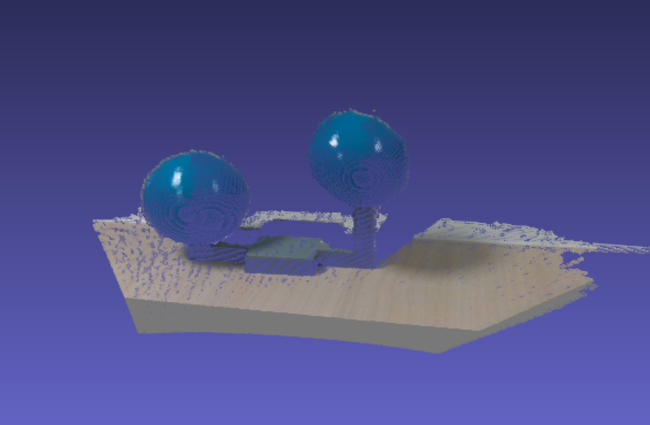

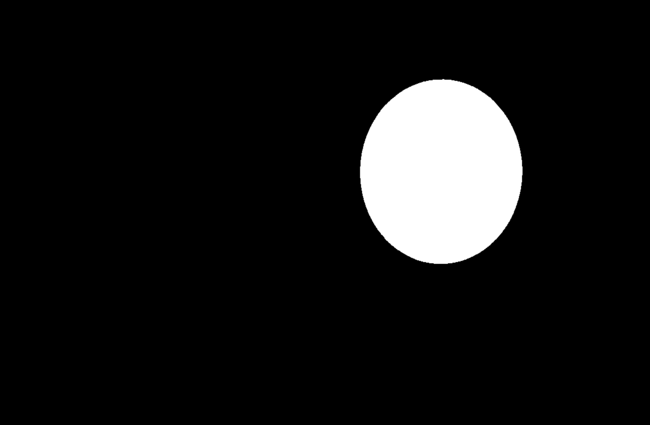

In this dataset, images are stored as .PNG, point clouds as .PLY, and configuration files as .YML.

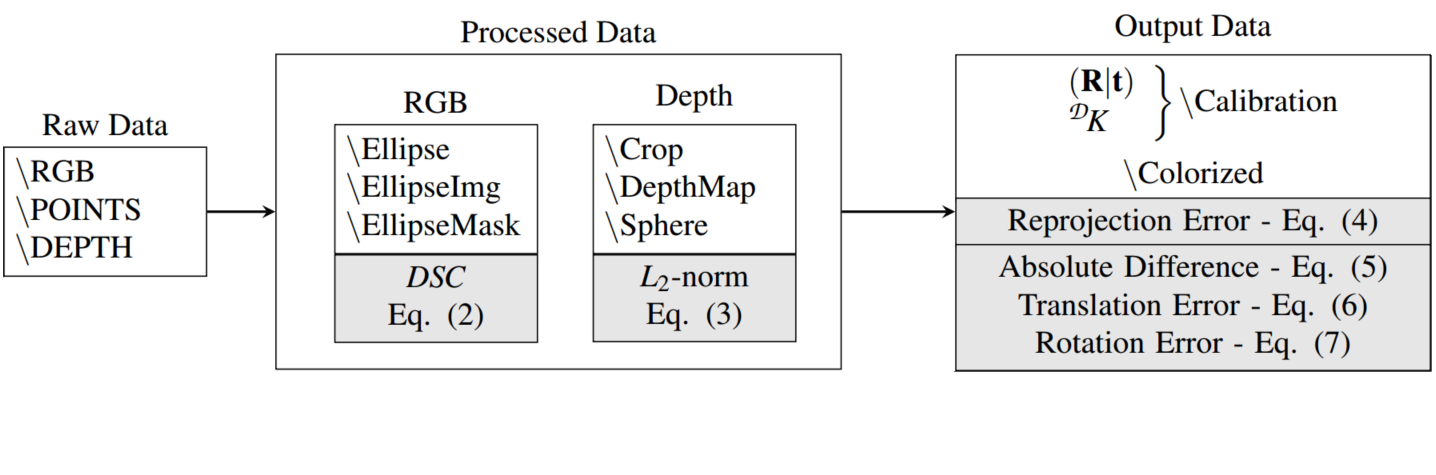

Every dataset is organized the same way, whether it contains real or synthetic data. Linked files share the same name. The RGB-D input data, as captured by the RGB-D camera pair, are stored in the RGB, POINTS and DEPTH folders. The Processed folder contains pre-computed data, such as the segmented ellipses and point clouds. The OUTPUT folder contains the calibration results of several algorithms.
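Because linked files share the same name, the RGB-D pairs of a dataset can be collected by matching file names across the input folders. The sketch below is a minimal illustration, assuming that linked files differ only by their extension (for example RGB/0001.png, DEPTH/0001.png and POINTS/0001.ply); the function name linked_keys is hypothetical.

```python
from pathlib import Path

# Input folders named in the dataset description.
INPUT_FOLDERS = ("RGB", "DEPTH", "POINTS")


def linked_keys(dataset_root):
    """Return the sorted file stems present in every available input folder.

    ASSUMPTION: linked files differ only by extension. Folders that are
    missing from a dataset are skipped rather than treated as empty.
    """
    root = Path(dataset_root)
    key_sets = []
    for name in INPUT_FOLDERS:
        folder = root / name
        if folder.is_dir():
            key_sets.append({p.stem for p in folder.iterdir() if p.is_file()})
    if not key_sets:
        return []
    return sorted(set.intersection(*key_sets))
```

Skipping missing folders follows the note that some datasets do not provide every folder; a stricter loader could raise an error instead.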

Note that some folders are missing from some datasets, either because the data were not available or because they are not meaningful (e.g. the EllipseImg folder for synthetic data).

Figure 2: Overview of the dataset organization and of the respective metrics (in light gray) used to evaluate the RGB-D calibration process.

Synthetical data


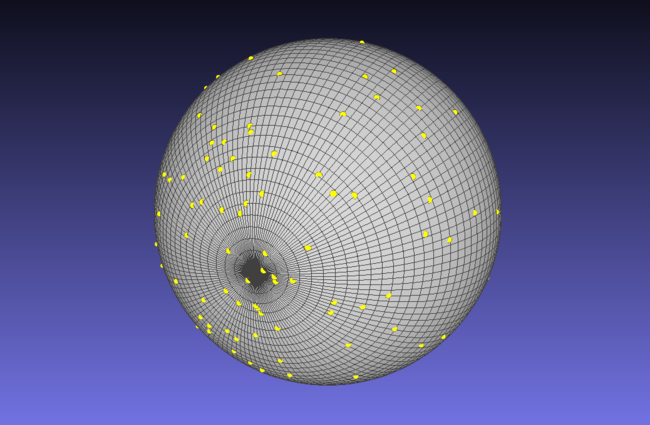

To precisely evaluate the influence of noise on the calibration result (especially on the extrinsic parameters), we built a synthetic dataset with known values, starting from a ground-truth scene. We applied three types of noise, as shown in Figure 3:

1 - Gaussian noise on the RGB ellipse points

2 - Noise on the RGB camera intrinsic parameters (each value is multiplied by a scalar)

3 - Noise on the depth data, as a shift of the whole point cloud (which displaces the point cloud centroid)
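The three noise types listed above can be sketched as follows. This is a minimal illustration, not the authors' generator: the function names are hypothetical, and the way SigmaKr and SigmaDepth (see noise.yml) map to the intrinsic scaling factors and to the point-cloud shift is an assumption, flagged in the comments.

```python
import random


def noisy_ellipse_points(points, sigma_rgb, rng):
    """Noise 1: add zero-mean Gaussian noise (std sigma_rgb, px) to each 2D point."""
    return [(x + rng.gauss(0.0, sigma_rgb), y + rng.gauss(0.0, sigma_rgb))
            for x, y in points]


def noisy_intrinsics(K, sigma_kr, rng):
    """Noise 2: multiply each intrinsic value by a random scalar.

    ASSUMPTION: fu, fv, u0, v0 and s each get their own factor drawn from
    N(1, sigma_kr); the bottom row [0, 0, 1] is left untouched.
    """
    K2 = [row[:] for row in K]  # copy so the input matrix is not modified
    for i, j in ((0, 0), (1, 1), (0, 2), (1, 2), (0, 1)):
        K2[i][j] *= rng.gauss(1.0, sigma_kr)
    return K2


def shifted_cloud(points, sigma_depth, rng):
    """Noise 3: shift the whole cloud by one offset, which moves its centroid.

    ASSUMPTION: the offset has one N(0, sigma_depth) component per axis.
    """
    dx, dy, dz = (rng.gauss(0.0, sigma_depth) for _ in range(3))
    return [(x + dx, y + dy, z + dz) for x, y, z in points]
```

Passing an explicit random.Random instance makes each noisy scene reproducible from a seed, which helps when a configuration is repeated many times.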

Because the applied noise is Gaussian, we performed multiple iterations to study the distribution of the resulting values.

Figure 3: Overview of the synthetic dataset generation

In the synthetic data, only the depth noise (noise n°3) changes between evaluations, increasing by 0.4 at each step. The noise values are provided in /noise.yml. Every step is repeated a hundred times to obtain reliable results. Because of the volume of synthetic data, the ground-truth scene (/GroundTruth) and the results (/results) are provided in separate folders.
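The evaluation protocol described above (depth noise raised in steps of 0.4, each setting repeated a hundred times) can be sketched as a simple loop that summarizes each noise level by the mean and standard deviation of an error. The names depth_noise_levels, run_sweep and the evaluate callback are hypothetical; starting at 0 and the number of levels are assumptions, since the actual values are those listed in /noise.yml.

```python
import statistics


def depth_noise_levels(n_levels, step=0.4):
    """Depth noise amounts for successive evaluations: 0, step, 2*step, ...

    ASSUMPTION: the sweep starts at 0; rounding removes float artifacts.
    """
    return [round(i * step, 10) for i in range(n_levels)]


def run_sweep(evaluate, n_levels, n_repeats=100):
    """Repeat a noisy calibration n_repeats times per depth noise level.

    `evaluate(sigma_depth, repeat_index)` is a hypothetical callback that
    generates one noisy scene, calibrates it and returns a scalar error.
    Returns {sigma_depth: (mean, population standard deviation)}.
    """
    summary = {}
    for sigma in depth_noise_levels(n_levels):
        errors = [evaluate(sigma, k) for k in range(n_repeats)]
        summary[sigma] = (statistics.mean(errors), statistics.pstdev(errors))
    return summary
```

Keeping the calibration step behind a callback lets the same loop drive any of the evaluated algorithms.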

How to use a dataset


To perform a calibration, you can use either the raw camera frames (/root) or the pre-computed ellipse and sphere detections (/Processed). Calibration results must be stored in the /OUTPUT folder.

To read our files, we recommend using the OpenCV library.

How to: Read an Ellipse File

We recommend using the cv::RotatedRect type to store the content of this file. Parameters:

- x (double): x coordinate of the ellipse center
- y (double): y coordinate of the ellipse center
- majorAxis (double): major axis of the ellipse
- minorAxis (double): minor axis of the ellipse
- angle (double): rotation angle of the ellipse (in degrees)
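Once an ellipse record is loaded, a common first use is testing which pixels fall inside it (for instance to build a mask). The sketch below is a minimal illustration: the Ellipse record and ellipse_contains are hypothetical helpers, and it assumes that majorAxis and minorAxis are full axis lengths (as in the size field of cv::RotatedRect) and that angle is measured from the image x axis towards the y axis.

```python
import math
from typing import NamedTuple


class Ellipse(NamedTuple):
    """One ellipse record; field names mirror the file parameters."""
    x: float
    y: float
    majorAxis: float
    minorAxis: float
    angle: float  # degrees


def ellipse_contains(e: Ellipse, px: float, py: float) -> bool:
    """Return True if pixel (px, py) lies inside or on the ellipse.

    ASSUMPTION: the axes are full lengths, so the semi-axes are half of them.
    """
    a = e.majorAxis / 2.0  # semi-major axis
    b = e.minorAxis / 2.0  # semi-minor axis
    t = math.radians(e.angle)
    dx, dy = px - e.x, py - e.y
    # Rotate the offset into the ellipse's own (major, minor) frame.
    u = dx * math.cos(t) + dy * math.sin(t)
    v = -dx * math.sin(t) + dy * math.cos(t)
    return (u / a) ** 2 + (v / b) ** 2 <= 1.0
```

If the file stores semi-axes instead, drop the division by 2; this is worth checking against a few images before building masks.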

How to: Read a Sphere File

- x (double): x coordinate of the sphere center
- y (double): y coordinate of the sphere center
- z (double): z coordinate of the sphere center
- u (double): x coordinate of the sphere center projected onto the depth camera image plane (using the manufacturer intrinsic parameters)
- v (double): y coordinate of the sphere center projected onto the depth camera image plane (using the manufacturer intrinsic parameters)
- Radius (double): radius of the fitted sphere
- rms (double): residual error of the fitting algorithm (least squares)
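The x, y, z, Radius and rms fields come from a least-squares sphere fit on the segmented point cloud. The exact fitting algorithm is not specified here, so the sketch below uses the standard algebraic formulation as a stand-in, which may differ from the authors' implementation. It solves x² + y² + z² = 2ax + 2by + 2cz + d through the normal equations and reports the RMS of the point-to-surface distances. The names fit_sphere and _solve are hypothetical.

```python
import math


def _solve(A, b):
    """Solve the square system A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def fit_sphere(points):
    """Algebraic least-squares sphere fit; returns (x, y, z, radius, rms).

    Needs at least 4 non-coplanar points. radius = sqrt(d + a^2 + b^2 + c^2).
    """
    rows = [[2 * x, 2 * y, 2 * z, 1.0] for x, y, z in points]
    rhs = [x * x + y * y + z * z for x, y, z in points]
    # Normal equations (A^T A) p = A^T f.
    AtA = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    Atf = [sum(r[i] * f for r, f in zip(rows, rhs)) for i in range(4)]
    a, b, c, d = _solve(AtA, Atf)
    radius = math.sqrt(d + a * a + b * b + c * c)
    residuals = [math.dist(p, (a, b, c)) - radius for p in points]
    rms = math.sqrt(sum(e * e for e in residuals) / len(residuals))
    return a, b, c, radius, rms
```

The algebraic fit is linear and needs no initial guess. A geometric (distance-minimizing) refinement started from this result is a common next step when the cloud is noisy.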

How to: Read a config.yml File

- sphereRadius (double): radius of the sphere used
- relativeSphereDistance (double): distance between the two spheres (Double dataset only)
- rgbIntrinsic (Mat3x3[double]): RGB camera intrinsic parameters
- depthIntrinsicManufacturer (Mat3x3[double]): depth camera intrinsic parameters (manufacturer values)
- rgbDistCoeffs (Mat5x1[double]): RGB camera distortion coefficients
- depthDistCoeffs (Mat5x1[double]): depth camera distortion coefficients
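The 3x3 intrinsic matrices relate 3D points to pixels. For instance, the u and v fields of a Sphere file correspond to the sphere center projected with the manufacturer depth intrinsics. The sketch below applies the pinhole model, including the skew term. It is a minimal illustration that ignores the distortion coefficients, and it assumes the point is expressed in the camera frame; the function name project is hypothetical.

```python
def project(K, point):
    """Project a 3D camera-frame point to pixels with a 3x3 intrinsic matrix K.

    K is assumed to be [[fu, s, u0], [0, fv, v0], [0, 0, 1]].
    Lens distortion (rgbDistCoeffs / depthDistCoeffs) is ignored.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    xn, yn = x / z, y / z  # normalized image coordinates
    u = K[0][0] * xn + K[0][1] * yn + K[0][2]
    v = K[1][1] * yn + K[1][2]
    return u, v
```

With OpenCV, cv2.projectPoints performs the same projection and also applies the distortion coefficients.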

How to: Read a Calibration.yml File

- fileKeys (array[string]): files used in the calibration
- translation (Mat3x1[double]): resulting translation
- rotation_degree (Mat3x1[double]): resulting rotation (in degrees)
- depthIntrinsic (Mat3x3[double]): resulting depth camera intrinsic parameters
- rgbIntrinsic (Mat3x3[double]): RGB camera intrinsic parameters
- meanReprojectionError (double): mean of the reprojection error values
- reprojectionError (array[double]): reprojection error values
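The translation and rotation_degree fields define the rigid transform between the two cameras. The sketch below turns them into a rotation matrix and maps a depth-frame point into the RGB frame. The Euler convention (rotations about x, then y, then z, composed as R = Rz·Ry·Rx) and the depth-to-RGB direction are assumptions, not confirmed by this page, so check them against the dataset paper [1]. The function names are hypothetical.

```python
import math


def rotation_matrix(rotation_degree):
    """Build R from the three angles of rotation_degree (degrees).

    ASSUMPTION: R = Rz @ Ry @ Rx, i.e. rotate about x, then y, then z.
    """
    ax, ay, az = (math.radians(a) for a in rotation_degree)
    cx, sx = math.cos(ax), math.sin(ax)
    cy, sy = math.cos(ay), math.sin(ay)
    cz, sz = math.cos(az), math.sin(az)
    return [
        [cz * cy, cz * sy * sx - sz * cx, cz * sy * cx + sz * sx],
        [sz * cy, sz * sy * sx + cz * cx, sz * sy * cx - cz * sx],
        [-sy, cy * sx, cy * cx],
    ]


def depth_to_rgb(rotation_degree, translation, point):
    """Map a 3D point from the depth camera frame to the RGB camera frame: R p + t.

    ASSUMPTION: the stored transform goes from depth to RGB, and the point
    uses the same unit as the translation.
    """
    R = rotation_matrix(rotation_degree)
    return tuple(sum(R[i][j] * point[j] for j in range(3)) + translation[i]
                 for i in range(3))
```

Projecting the transformed sphere centers with rgbIntrinsic and comparing them with the detected ellipses is one way to sanity-check a calibration file.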

How to: Read a detectionEvaluation.csv File

This file evaluates the ellipse and sphere detections against the manually segmented ground truth.

- index: name of the evaluated file
- DSC: Sørensen-Dice coefficient for the ellipse detection evaluation (see Equation 2)
- L2: L2-norm for the sphere detection evaluation (see Equation 3)
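Equations 2 and 3 are not reproduced on this page. The sketch below assumes the usual definitions: the Sørensen-Dice coefficient 2|A ∩ B| / (|A| + |B|) between the detected and ground-truth ellipse masks, and the Euclidean distance between the detected and ground-truth sphere centers. Masks are represented as sets of pixel coordinates, and the function names are hypothetical.

```python
import math


def dice_coefficient(mask_a, mask_b):
    """Sørensen-Dice coefficient of two binary masks given as sets of (row, col) pixels."""
    a, b = set(mask_a), set(mask_b)
    if not a and not b:
        return 1.0  # convention: two empty masks agree perfectly
    return 2.0 * len(a & b) / (len(a) + len(b))


def center_l2(detected, ground_truth):
    """Euclidean (L2) distance between detected and ground-truth sphere centers."""
    return math.dist(detected, ground_truth)
```

A DSC of 1 means identical masks and 0 means no overlap; the L2 value is in the unit of the point clouds.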

How to: Read a noise.yml File (synthetic data only)

- SigmaRGB: Gaussian noise on the RGB ellipse points (1)
- SigmaKr: noise on the RGB camera intrinsic parameters (2)
- SigmaDepth: noise on the depth data (3)

How to: Read a CalibrationResults File (synthetic data only)

- e_r: reprojection error (see Equation 4)
- E_r: 3D point-to-point mean distance (see Equation 5)
- R_err: rigid body rotation error (see Equation 8)
- t_err: rigid body translation error (see Equation 7)
- K_diff: Fu_diff + Fv_diff + u0_diff + v0_diff + s_diff (for each *_diff variable, see Equation 6)
  - Fu_diff: absolute difference of the focal length expressed in pixel widths (px)
  - Fv_diff: absolute difference of the focal length expressed in pixel heights (px)
  - u0_diff: absolute difference of the u0 coordinate of the principal point (u0, v0) (px)
  - v0_diff: absolute difference of the v0 coordinate of the principal point (u0, v0) (px)
  - s_diff: absolute difference of the skew (affine) parameter (px)
- TR_diff: R_diff + t_diff
  - R_diff: Rx_diff + Ry_diff + Rz_diff
    - Rx_diff: absolute difference of the rotation around the x axis (deg.)
    - Ry_diff: absolute difference of the rotation around the y axis (deg.)
    - Rz_diff: absolute difference of the rotation around the z axis (deg.)
  - t_diff: tx_diff + ty_diff + tz_diff
    - tx_diff: absolute difference of the translation along the x axis (mm)
    - ty_diff: absolute difference of the translation along the y axis (mm)
    - tz_diff: absolute difference of the translation along the z axis (mm)
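The *_diff values defined above are sums of absolute differences between estimated and ground-truth parameters, so they can be recomputed directly. The sketch below assumes the standard intrinsic layout [[fu, s, u0], [0, fv, v0], [0, 0, 1]] and takes rotations in degrees and translations in mm. Angle differences are not wrapped (359° vs 1° gives 358°), following the literal definition. The function names are hypothetical.

```python
def intrinsic_diffs(K_est, K_gt):
    """Per-parameter absolute differences (px) and their sum K_diff."""
    idx = {"Fu": (0, 0), "Fv": (1, 1), "u0": (0, 2), "v0": (1, 2), "s": (0, 1)}
    d = {f"{name}_diff": abs(K_est[i][j] - K_gt[i][j]) for name, (i, j) in idx.items()}
    d["K_diff"] = sum(d.values())  # evaluated before K_diff is added
    return d


def pose_diffs(rot_est, rot_gt, t_est, t_gt):
    """Absolute rotation (deg.) and translation (mm) differences, with R_diff, t_diff, TR_diff."""
    d = {}
    for axis, re, rg in zip("xyz", rot_est, rot_gt):
        d[f"R{axis}_diff"] = abs(re - rg)
    for axis, te, tg in zip("xyz", t_est, t_gt):
        d[f"t{axis}_diff"] = abs(te - tg)
    d["R_diff"] = d["Rx_diff"] + d["Ry_diff"] + d["Rz_diff"]
    d["t_diff"] = d["tx_diff"] + d["ty_diff"] + d["tz_diff"]
    d["TR_diff"] = d["R_diff"] + d["t_diff"]
    return d
```

Note that TR_diff adds degrees and millimeters, as in the definition above, so it is best read as an aggregate score rather than a physical quantity.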

Currently evaluated algorithms


- Direct Linear Transform (DLT) [2]

- Staranowicz et al. [3] (based on DLT for initialization)

- Boas et al. [4] (based on DLT for initialization)

References


[1] D. J. T. Boas, S. Poltaretskyi, J.-Y. Ramel, J. Chaoui, J. Berhouet, and M. Slimane, “A Benchmark Dataset for RGB-D Sphere-Based Calibration”

[2] R. Hartley and A. Zisserman, Multiple View Geometry in Computer Vision, 2nd ed., Cambridge University Press, 2004, ISBN: 0521540518

[3] A. Staranowicz, G. R. Brown, F. Morbidi, and G. L. Mariottini, “Easy-to-Use and Accurate Calibration of RGBD Cameras from Spheres,” in Image and Video Technology, vol. 8333, pp. 265–278, Springer Berlin Heidelberg, 2014

[4] D. J. T. Boas, S. Poltaretskyi, J.-Y. Ramel, J. Chaoui, J. Berhouet, and M. Slimane, “Relative pose improvement of sphere based RGB-D calibration,” in Proceedings of the 14th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications, vol. 4, pp. 91–98, 2019

Downloading and conditions of use


- This database is publicly available and free for research staff.

- Please inform us about your work and, if possible, send a copy of your publication to the contacts listed below. We will eventually post a list of references on this web page.

- Limitation of liability: the images contained in this database are provided 'as is' without warranty of any kind. The entire risk is assumed by the user, and in no event will RFAI be liable for any consequential, incidental or direct damages suffered in the course of using the database.

- Permission to use, but not to reproduce or distribute, the database is granted to all researchers, provided that the following conditions are met:
  - Permission is NOT granted to reproduce the database or to post it on any other web page.
  - No economic profit may be derived from this database.

Contacts


Technical questions about the database, the format, or problems obtaining the data should be directed to the database editors:

- David Boas, "boas.dav@gmail.com"

- Jean-Yves Ramel, "jean-yves.ramel@univ-tours.fr"

- Mohamed Slimane, "mohamed.slimane@univ-tours.fr"

Lab./Dep. Informatique de Tours - PolytechTours

64, Av. Jean Portalis

37200 TOURS - FRANCE

Tel: +33 2.47.36.14.26